The biggest problem (after the problem with their data quality) I am having with Google Webmaster Tools is that you can’t export all the data for external analysis. Luckily the guys from the FMiner.com web scraping tool contacted me a few weeks ago to test their tool. The problem with Webmaster Tools is that you can’t use web based scrapers and all the other screen scraping software tools were not that good in the steps you need to take to get to the data within Webmaster Tools. The software is available for Windows and Mac OSX users.

FMiner is a classical screen scraping app, installed on your desktop. Since you need to emulate real browser behaviour, you need to install it on your desktop. There is no coding required and their interface is visual based which makes it possible to start scraping within minutes. Another possibility I like is to upload a set of keywords, to scrape internal search engine result pages for example, something that is missing in a lot of other tools. If you need to scrape a lot of accounts, this tool provides multi-browser crawling which decreases the time needed.

This tool can be used for a lot of scraping jobs, including Google SERPs, Facebook Graph search, downloading files & images and collecting e-mail addresses. And for the real heavy scrapers, they also have built in a captcha solving API system so if you want to pass captchas while scraping, no problem.

Below you can find an introduction to the tool, with one of their tutorial video’s about scraping IMDB.com:

More basic and advanced tutorials can be found on their website: Fminer tutorials. Their tutorials show you a range of simple and complex tasks and how to use their software to get the data you need.

Guide for Scraping Webmaster Tools data

The software is capable of dealing with JavaScript and AJAX, one of the main requirements to scrape data from within Google Webmaster Tools. Disclaimer: using this software is against the Google Terms of Service so use it on your own risk!

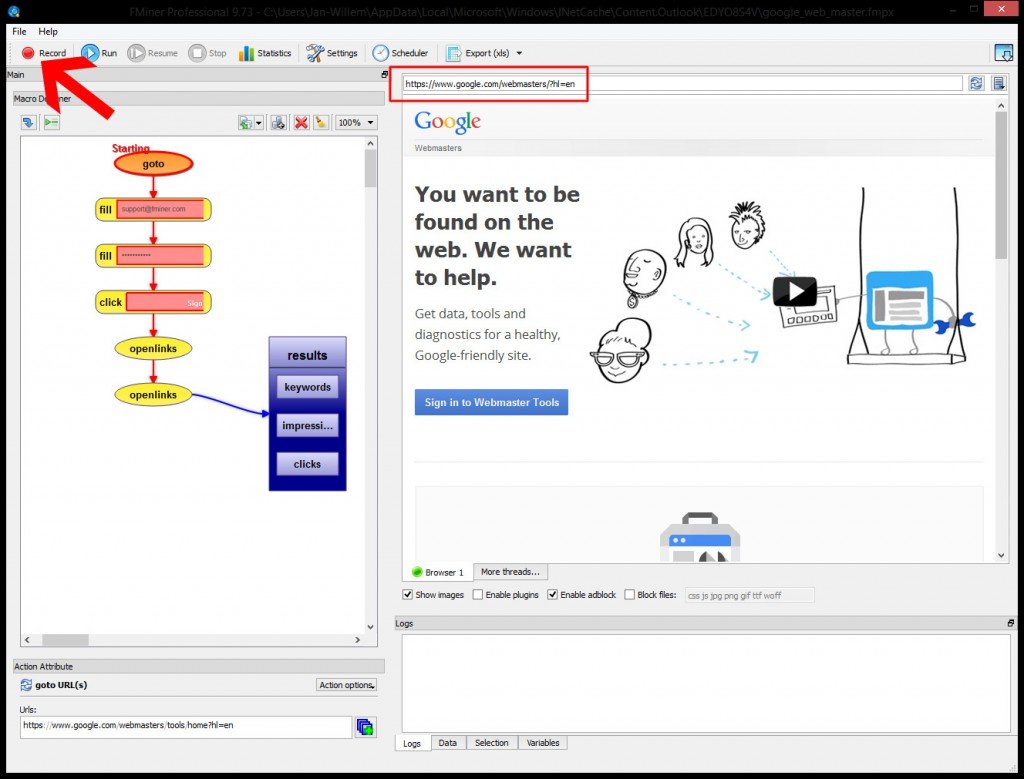

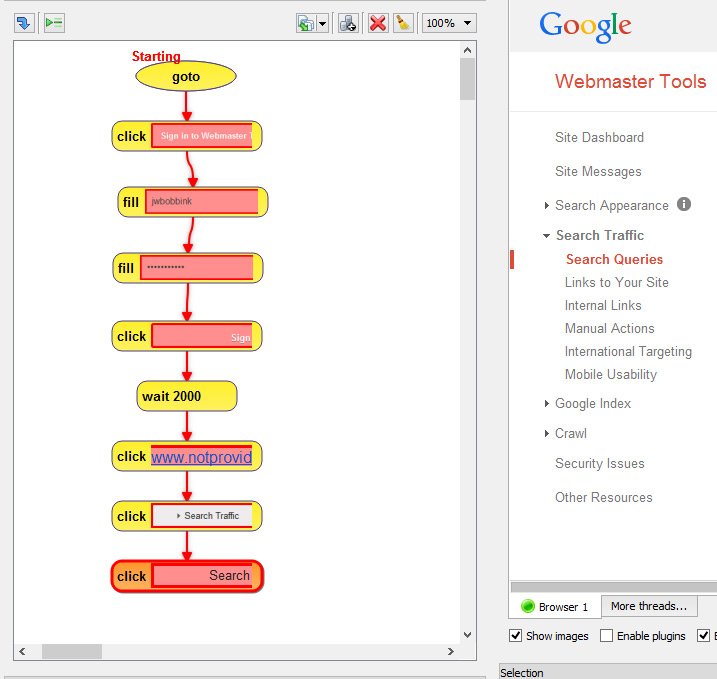

Step 1: The first challenge is to login into webmaster tools. After opening a new project, first browse to https://www.google.com/webmasters/ and select the Recording button in the upper left corner.

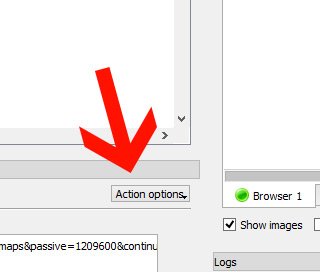

After browsing to this page, a goto action appears in the left panel. Click on this button and look for the “Action Options” button at the bottom of that panel. Tick the option Clear cookies before do it to avoid problems if you are already logged in for example.

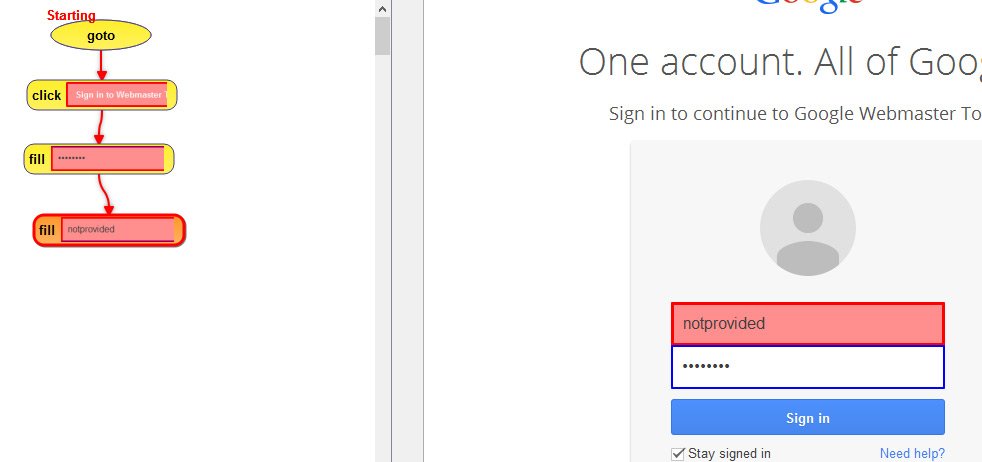

Step 2: Click the “Sign in Webmaster Tools” button. You will notice the Macro designer overview on the left registered a click as the first step.

Step 3: Fill in your Google username and password. In the designer panel you will see the two Fill actions emerging.

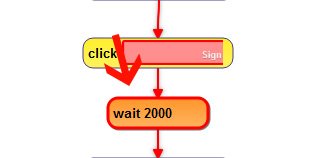

Step 4: After this step you should add some waiting time to be sure everything is fully loaded. Use the second button on the right side above the Macro Designer panel to add an action. 2000 milliseconds (2 seconds :)) will do the job.

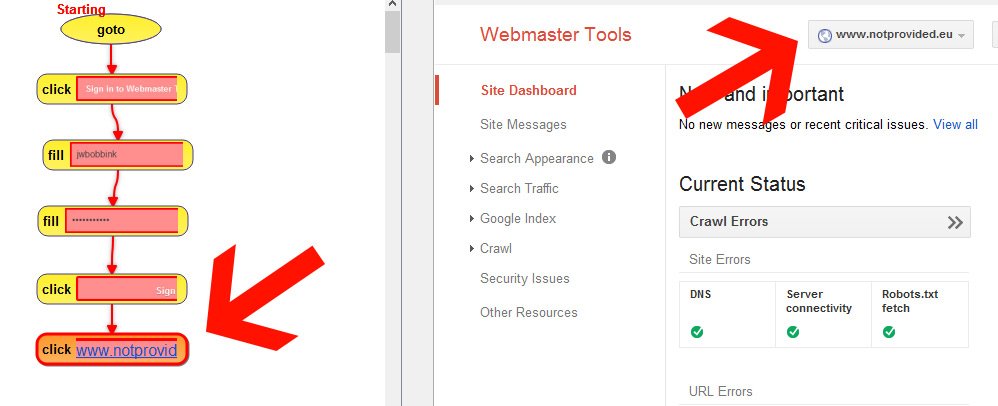

Step 5: Browse to the account of which you want to export the data from

Step 6: Browse to the specific pages of which you want the data scraped

Step 7:Scrape the data from the tables as shown in the video

Congratulations, now you are able to scrape data from Google Webmaster Tools 🙂

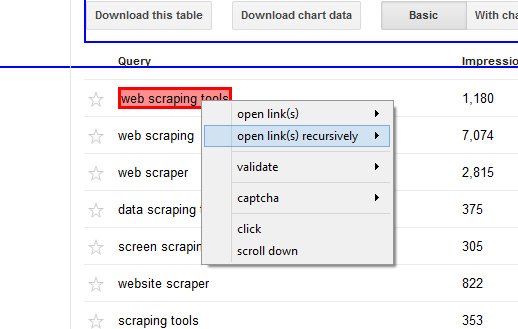

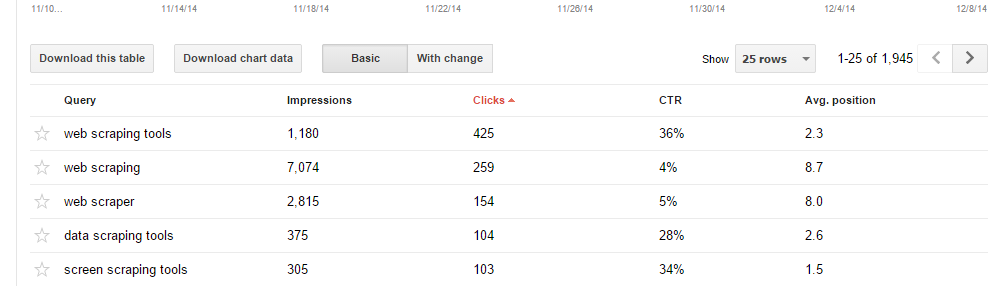

Step 8: One of the things I use it for is pulling the search query data per keyword, which you normally can’t export. To do that, you have to use a right mouse click on the keyword, which opens a menu with options. Go to open links recursively and select normal. This will loop through all the keywords.

Step 9: This video will show you how to make use of the pagination elements to loop through all the pages:

You can also download the following file, which has a predefined set of actions to login in WMT and download the keywords, impressions and clicks: google_webmaster_tools_login.fmpx. Open the file and update the login details by clicking on those action buttons and insert your own Google account details.

Automating and scheduling scrapers

For people that want to automate and regularly download the data, you can setup a Scheduler config and within the project settings you can setup the program to send an e-mail after completion of the crawl:

Comments

7 responses to “Scraping Webmaster Tools with FMiner”